
Google, Meta, Microsoft and Snap said they would continue to take voluntary actions to protect their platforms following the expiration of a derogation from the EU ePrivacy Directive.


With the EU failing to come to terms on a new agreement over child safety protections, Google, Meta, Microsoft and Snap reaffirmed their commitment to protecting children and preserving privacy on their platforms.

On April 3, safety and reporting regulations contained within the EU ePrivacy Directive expired. Those protections included parameters that enabled online platforms to scan their services for child sexual abuse material (CSAM), which means that online platforms are no longer allowed to conduct this scanning under EU privacy laws.

The hope had been that EU regulators would agree on an alternative before these rules, which protect platforms from prosecution under user privacy regulations, lapsed. But an amended clause could not be agreed to in time, meaning child safety measures have now been reduced in the EU.

On Saturday, Google, Meta, Microsoft and Snap issued a joint statement criticizing the lapse of the rules. The statement also said the companies would continue to push to maintain the current protection systems.

As per the joint statement: “Today, because of the expiry of the ePrivacy derogation enabling the use of technology to detect child sexual abuse material (CSAM), Europe risks leaving children across the globe less protected from the most abhorrent harm.”

The companies said the removal of legal protections was an “irresponsible failure” on the EU’s part.

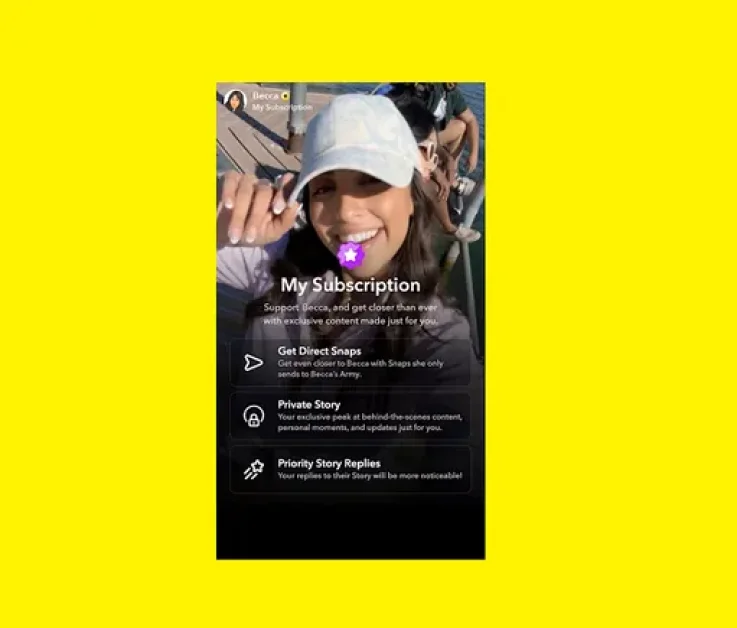

“As EU institutions continue to negotiate an immediate, interim solution and durable framework, signatory companies (Google, Meta, Microsoft, and Snap) reaffirm their continued commitment to protecting children and preserving privacy,” the statement said, adding that these companies will continue to take voluntary action on their services.

The prolonged disagreement could enable CSAM to be transmitted without detection across digital apps.

The hope remains that EU regulators can come to an agreement soon and ensure that this lapse doesn’t lead to broad-scale harm. But it remains a major concern that clearly needs more urgent focus.